Learning Python with Spark Framework

A Comprehensive Guide to Mastering PySpark

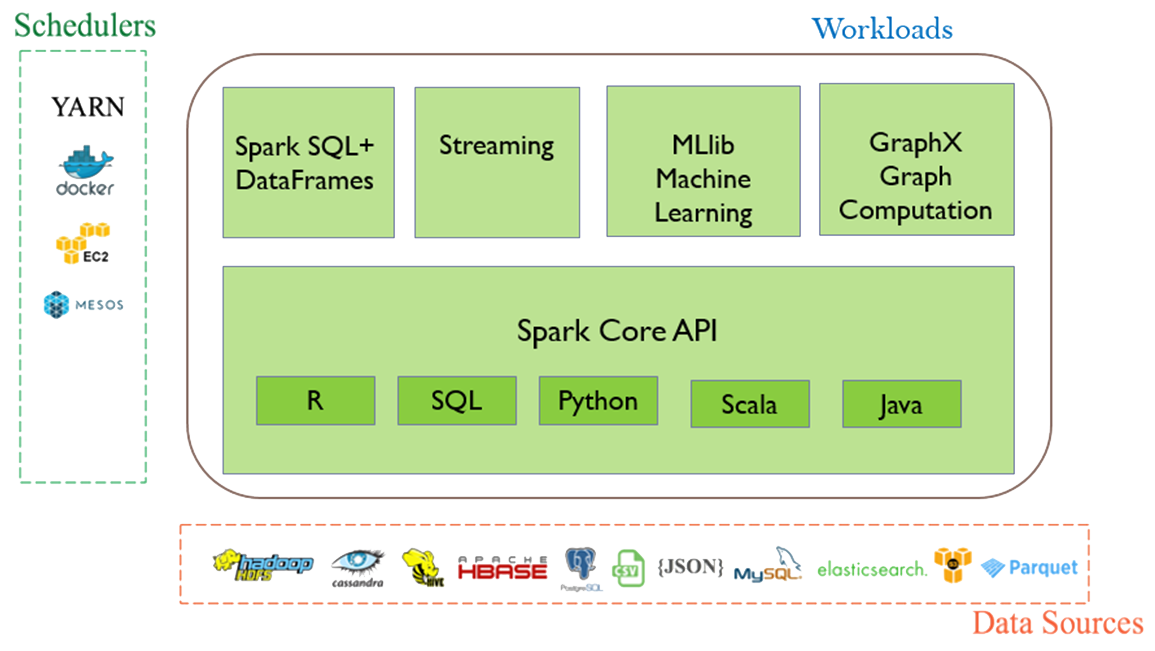

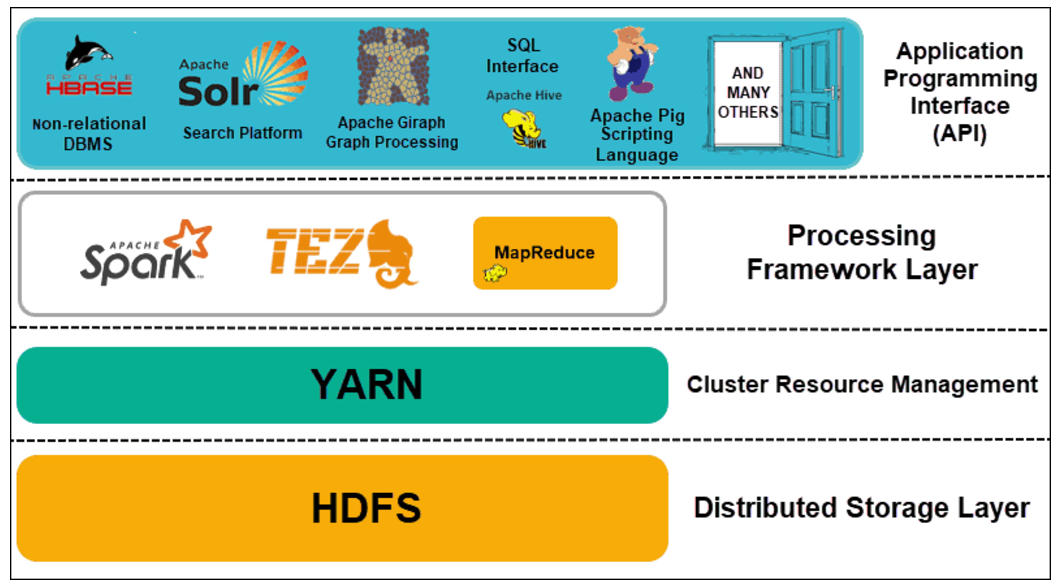

Apache Spark is a unified analytics engine for large-scale data processing that has gained immense popularity in recent years. Its high-level APIs in Java, Scala, Python, and R make it an ideal choice for data scientists and engineers. In this article, we will delve into the world of PySpark, the Python API for Apache Spark, and explore how it can be used for big data processing and machine learning tasks.

What is PySpark?

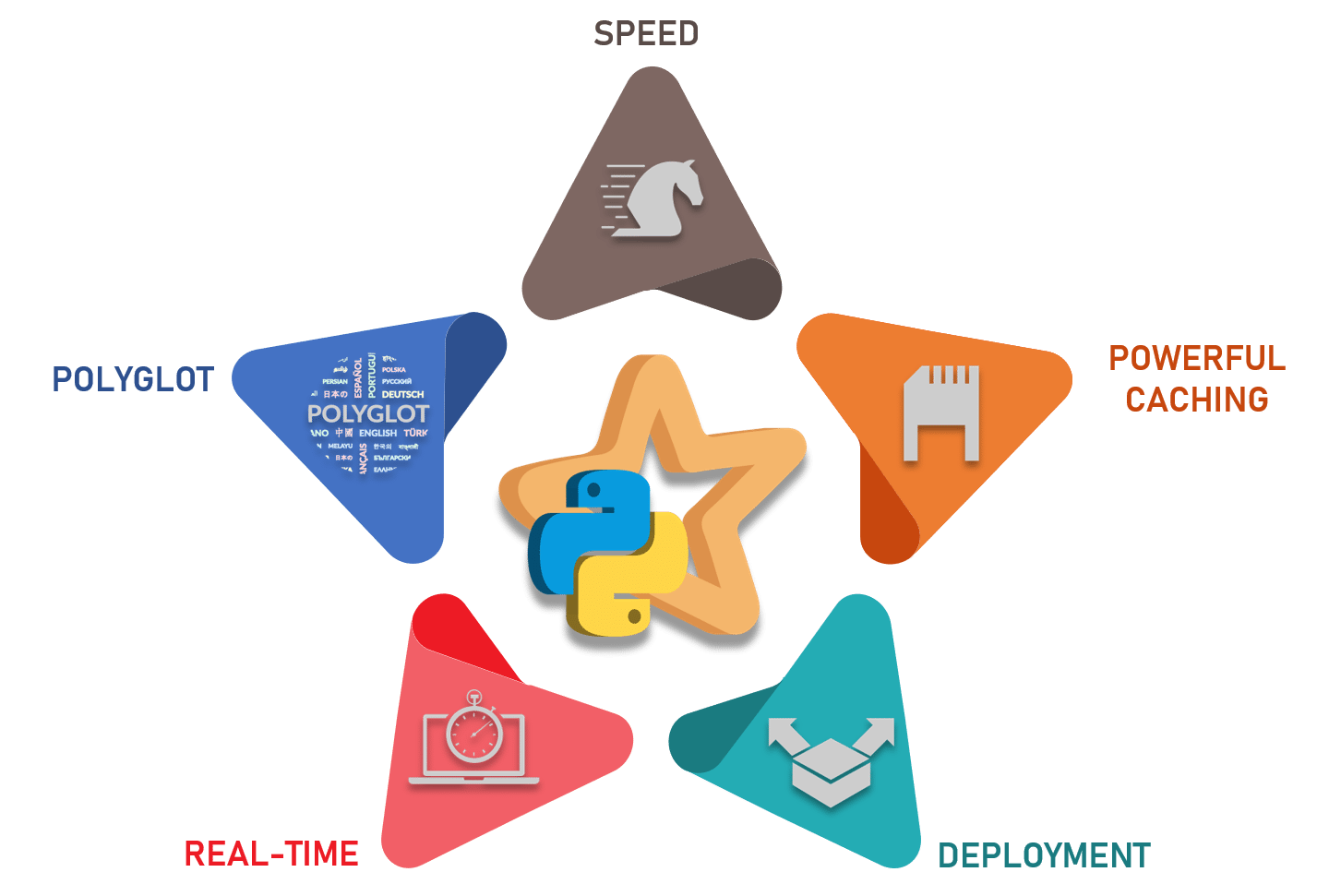

PySpark is an interface for Apache Spark in Python. It allows you to write Python and SQL-like commands to manipulate and analyze data in a distributed processing environment. Using PySpark, data scientists can manipulate data, build machine learning pipelines, and tune models. Most data scientists and analysts are familiar with Python and use it to implement machine learning algorithms, making PySpark an ideal choice for big data processing and analytics.

Key Features of PySpark

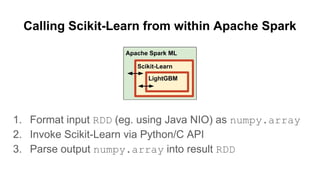

PySpark provides a rich set of features for big data processing and machine learning tasks. Some of the key features of PySpark include:

* High-Performance Computing: PySpark runs on the Spark engine, which distributes computation across a cluster, making it well suited to large-scale data processing tasks.
* Data Manipulation: PySpark provides a wide range of data manipulation capabilities, including data filtering, aggregation, and grouping.
* Machine Learning: PySpark provides a built-in machine learning library, MLlib, that allows you to build and train machine learning models.
* Data Visualization: PySpark does not ship plotting tools of its own. Spark SQL and DataFrames are used to prepare and summarize the data, which can then be passed to standard Python visualization libraries.

PySpark also offers a range of benefits for big data processing and machine learning tasks. Some of the key benefits of using PySpark include:

* Scalability:

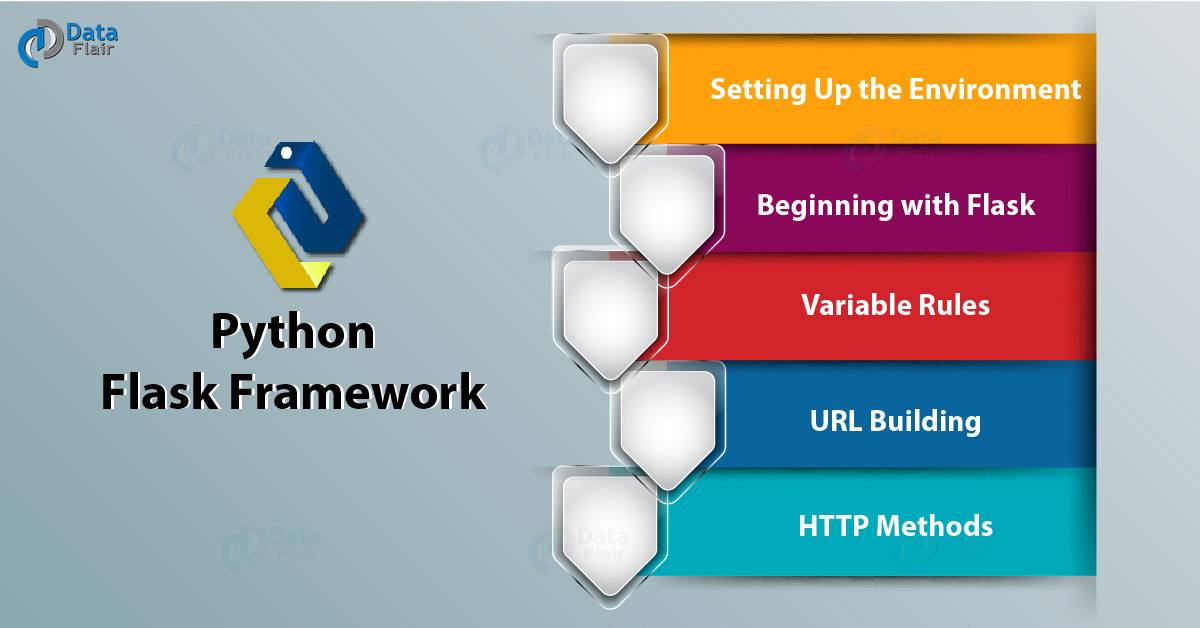

Getting Started with PySpark

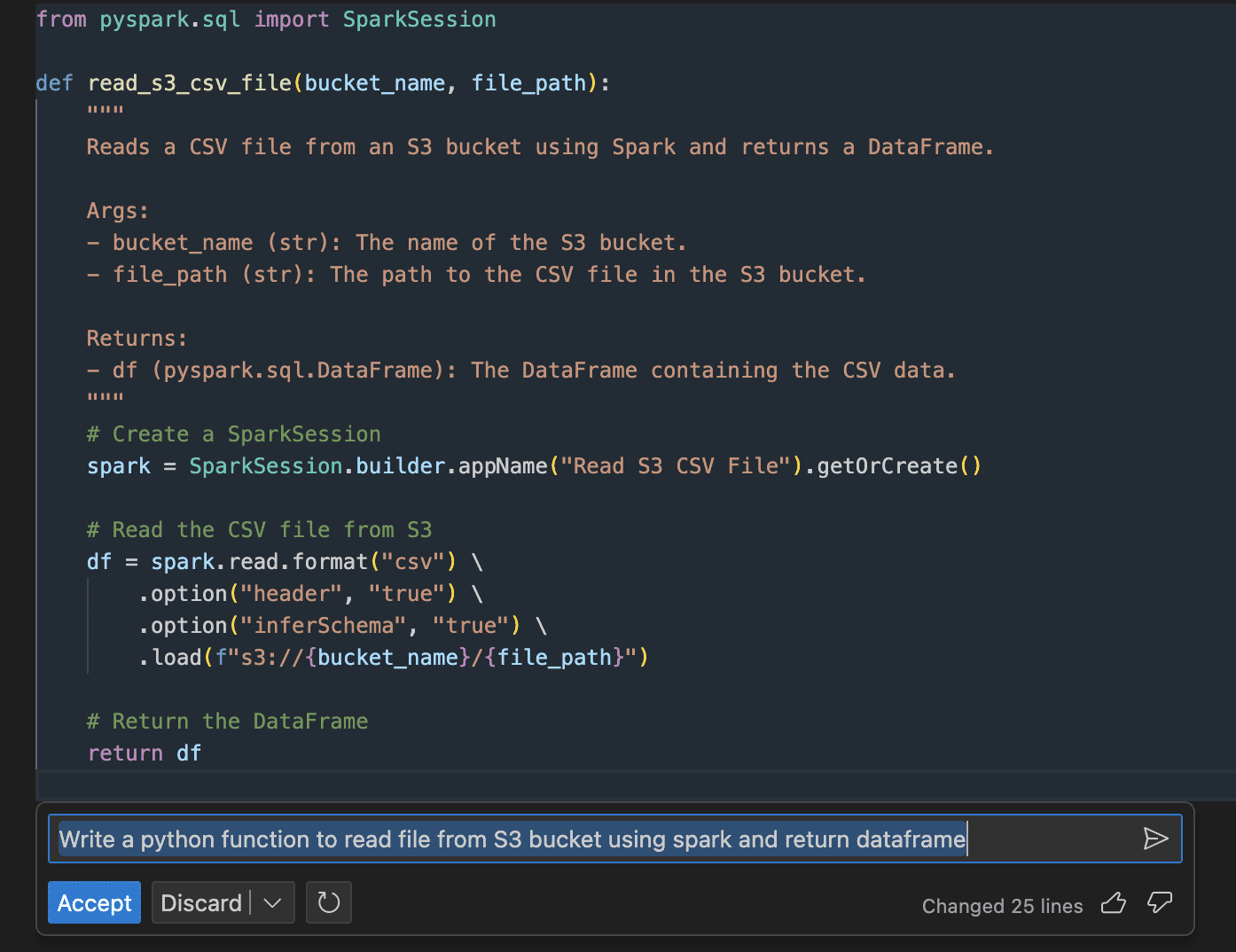

Getting started with PySpark is straightforward. Here are the basic steps required to set it up:

1. Install PySpark: The first step is to install PySpark on your machine. You can install it using pip, the Python package manager: `pip install pyspark`
2. Import PySpark: Once PySpark is installed, you can import it into your Python script using the following code: `from pyspark.sql import SparkSession`
3. Initialize Spark Session: To use PySpark, you need to initialize a Spark session. You can do this using the following code: `spark = SparkSession.builder.appName("PySpark").getOrCreate()`
4. Load Data: